The clock just reset, but the mission has never been clearer. On Thursday, March 26, 2026, the European Parliament voted overwhelmingly in favour of delaying the implementation of the EU AI Act for high-risk AI systems. This delay applies to systems involving biometrics, critical infrastructure, education, law enforcement, and essential services. The proposed compliance date for these systems is now December 2, 2027, a delay of 16 months from the original date of August 2, 2026.

This decision follows sustained pressure from industry groups and the European Commission’s Digital Omnibus proposals, which sought to ease regulatory burdens and align implementation with the availability of harmonised technical standards. While many organisations are breathing a metaphorical sigh of relief, this is no signal to pause. In fact, a dangerous industry trend is emerging: organisations are spending this extra time doing the wrong thing faster.

The Compliance Trap: Auditing the Platform, Missing the Person

Most organisations approaching compliance are committing a fundamental category error. They are reviewing their AI platforms, checking vendor certifications, and pulling model cards. They confirm that SOC 2 or ISO/IEC 42001 certifications are in place and conclude the box is ticked. It is not.

The EU AI Act is not about regulating the technology behind AI systems; it is about regulating what that technology is allowed to do to people. That includes primary and secondary users, as well as third parties who never interact with the system but are directly or indirectly affected by its decisions. Organisations that frame their EU AI Act compliance journey around the technology are working on the wrong thing.

Use Case vs. Model: The Risk Decoupling

Fundamentally, the EU AI Act is a harms-to-persons framework. It cares about outputs, decisions, and impacts on relevant stakeholders. The Act does not prescribe technical guidelines for model architecture, weights, or APIs. Instead, the risk classification (unacceptable, high, limited, or minimal) is anchored entirely to the use case and the affected population, not to the underlying model or platform.

Take a GPT-4 model as an example. Used for playlist recommendations, the deployment is classified as minimal risk. Used to rank candidates for a job role, the exact same model is considered high risk. The model did not change; the use did, and the classification changed with it. The compliance obligations, and the penalties for getting them wrong, therefore follow the use, not the model.
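
The decoupling can be made concrete with a minimal Python sketch. Everything in it is an illustrative assumption: the `Deployment` type, the `AREA_TIER` lookup, and the area names are hypothetical, and the lookup only mirrors the examples in this article. It is not the Act's legal classification test. The point it shows is that the tier is computed from the use, and the model name never enters the decision.

```python
from dataclasses import dataclass

# Hypothetical, simplified lookup based only on examples named in this article.
# Real classification requires legal analysis of the Act, not a dictionary.
AREA_TIER = {
    "biometrics": "high",
    "critical_infrastructure": "high",
    "education": "high",
    "law_enforcement": "high",
    "essential_services": "high",
    "employment": "high",        # e.g. ranking job candidates
    "entertainment": "minimal",  # e.g. playlist recommendations
}


@dataclass(frozen=True)
class Deployment:
    model: str     # recorded for reference, never used for classification
    use_case: str
    area: str


def risk_tier(deployment: Deployment) -> str:
    """Return the tier for a deployment based on its area of use only."""
    # Unknown areas are flagged for human review rather than guessed.
    return AREA_TIER.get(deployment.area, "needs_review")


playlist = Deployment("gpt-4", "playlist recommendations", "entertainment")
hiring = Deployment("gpt-4", "candidate ranking", "employment")

print(risk_tier(playlist))  # minimal
print(risk_tier(hiring))    # high
```

Both deployments share the same `model` value, yet they land in different tiers because only `area` is consulted.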

The Right Frame: From Model Registry to Use Case Inventory

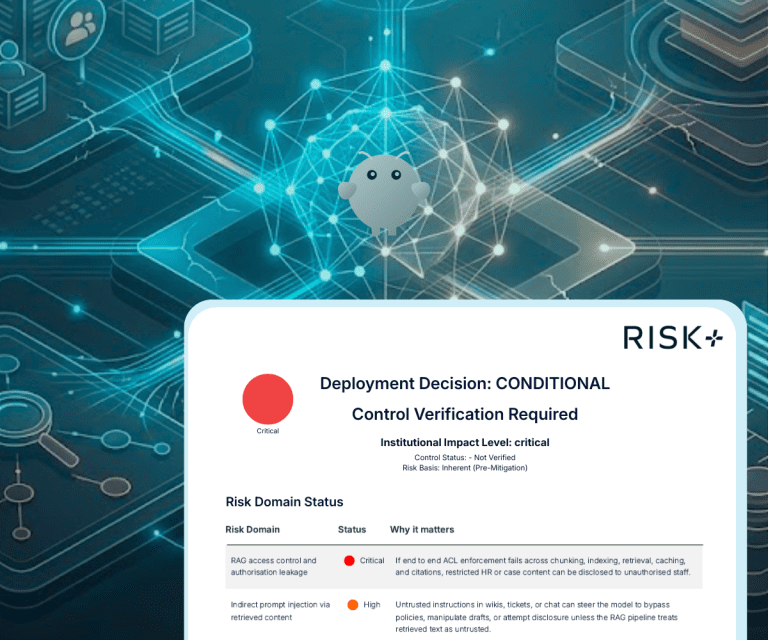

Compliance must be structured around the AI system as it is actually deployed. This requires a comprehensive mapping of the specific use cases and the population or stakeholders they affect. It also requires an assessment of the specific decisions the AI influences or automates.

Full governance demands a risk assessment for each of these deployments, with obligations assigned accordingly. By following a use case inventory rather than a model registry, the ownership of compliance shifts: it moves away from GRC and CTO offices toward the business units, legal teams, and functions closest to the people the AI affects.
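
As a rough illustration of what a use case inventory might hold, here is a small Python sketch. The `UseCaseEntry` fields, the helper functions, and the sample entries are all hypothetical, chosen to mirror the elements described above: the use case, the affected population, the decision influenced or automated, and an accountable owner. A real inventory would carry far more detail.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Optional


@dataclass
class UseCaseEntry:
    name: str
    affected_population: list[str]   # who the deployment touches
    decision: str                    # what the AI influences or automates
    automated: bool                  # True if no human makes the final call
    owner: str                       # business function accountable for it
    risk_tier: Optional[str] = None  # None until a risk assessment is done


def unassessed(inventory: list[UseCaseEntry]) -> list[str]:
    """Names of use cases that still lack a risk assessment."""
    return [entry.name for entry in inventory if entry.risk_tier is None]


def by_owner(inventory: list[UseCaseEntry]) -> dict[str, list[str]]:
    """Group use case names by the function that owns them."""
    grouped: dict[str, list[str]] = defaultdict(list)
    for entry in inventory:
        grouped[entry.owner].append(entry.name)
    return dict(grouped)


# Hypothetical sample entries for illustration only.
inventory = [
    UseCaseEntry("candidate ranking", ["job applicants"],
                 "shortlisting for interview", False, "HR", "high"),
    UseCaseEntry("playlist recommendations", ["subscribers"],
                 "content ordering", True, "Product", "minimal"),
    UseCaseEntry("loan pre-screening", ["loan applicants"],
                 "eligibility flagging", False, "Retail Banking"),
]

print(unassessed(inventory))  # ['loan pre-screening']
print(by_owner(inventory))
```

Keying the inventory on the owning function, rather than on the model or vendor, is what makes the ownership shift visible: each entry names the team closest to the affected people.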

The Question Regulators Will Ask

Organisations that anchor compliance to their platform will spend significant time and money producing technically impressive documentation that does not address what regulators will actually scrutinise. When auditors arrive, they will not ask what AI you use. Instead, they will ask what your AI does to people and what you did about it. Organisations should build their compliance programmes around that specific question.

With the recent announcement of the extension for compliance to December 2027 for high-risk AI systems, organisations have been afforded more time. That time is for doing the right thing, not for doing the wrong thing faster.

This is the approach we take at AIQURIS. We help organisations take a deep dive into their risk profile with a specific focus on the AI use cases being deployed. Our proprietary platforms, Impact+ and Risk+, focus on identifying the impacted stakeholders and assessing risk across six comprehensive domains including Legal, Security, and Ethics. We identify potential harms arising from the AI use case and recommend the exact actions needed to close the gaps.